Benchmarking Nemotron 3 Nano 4B on the Jetson Orin Nano Super

Antwon · March 24, 2026 · 10 min read

Introduction

NVIDIA just released Nemotron 3 Nano 4B, their latest small language model (SLM). Let's try running it on their $250 edge AI computer, the Jetson Orin Nano Super!

Prerequisites

You must have correctly set up your Jetson's operating system and upgraded to JetPack 6.2. I personally am booting from an SSD -- I believe I followed this tutorial when doing so.

Hardware and Software

- Device: NVIDIA Jetson Orin Nano Super 8GB

- Storage: 512GB NVMe M.2 SSD

- Power mode: MAXN_SUPER

- Inference engine: llama.cpp b8304

- CUDA: 12.6

- Flash Attention: Enabled

Methodology

In its original, unquantized form, Nano 4B won't fit within the Jetson's 8 gigabytes of memory, so we have to quantize it. Thankfully, Unsloth and NVIDIA have both provided quants for us to try out.

Between each quant's benchmark, I kill the existing server, drop page caches, load the next model, and send a throwaway 10-token request to warm it up.

Then I send the actual benchmark prompt, capping the output at 256 tokens:

Explain in detail the history of computing from the invention of the abacus through modern quantum computers. Cover key milestones including mechanical calculators, vacuum tubes, transistors, integrated circuits, microprocessors, the internet, and artificial intelligence. Discuss the contributions of notable figures such as Charles Babbage, Ada Lovelace, Alan Turing, John von Neumann, and others.

This prompt was relatively arbitrary, and it was generated with Claude Code. If you are testing Nemotron on your own Jetson, feel free to ask it whatever you'd like.
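If you'd like to script the same loop, here is a minimal Python sketch of the warm-up and benchmark requests. It is not necessarily the script behind the numbers in this post. It assumes llama-server is listening on its default address (`127.0.0.1:8080`), that you call its OpenAI-compatible `/v1/chat/completions` endpoint, and that the response carries a `timings` object with `predicted_per_second` and `prompt_per_second` fields. The function names and the warm-up prompt are made up for illustration.

```python
import json
import subprocess
import urllib.request

# Assumption: llama-server's default host and port.
SERVER_URL = "http://127.0.0.1:8080"


def drop_page_caches() -> None:
    """Flush dirty pages, then ask Linux to drop its page cache (needs root)."""
    subprocess.run(["sync"], check=True)
    with open("/proc/sys/vm/drop_caches", "w") as f:
        f.write("3\n")


def build_payload(prompt: str, max_tokens: int) -> dict:
    """Request body for the OpenAI-compatible chat endpoint."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def post_chat(payload: dict, base_url: str = SERVER_URL, timeout: float = 600.0) -> dict:
    """POST the payload to the server and return the decoded JSON response."""
    request = urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def extract_speeds(response: dict) -> dict:
    """Pull generation and prompt-processing tok/s out of the timings, if present."""
    timings = response.get("timings", {})
    return {
        "gen_tok_s": timings.get("predicted_per_second"),
        "pp_tok_s": timings.get("prompt_per_second"),
    }


def run_benchmark(prompt: str) -> dict:
    """Send a 10-token warm-up request, then the real 256-token request."""
    post_chat(build_payload("Say hello.", max_tokens=10))  # hypothetical warm-up prompt
    return extract_speeds(post_chat(build_payload(prompt, max_tokens=256)))
```

With a server already running, `run_benchmark("<your prompt>")` returns a small dictionary of speeds. Call `drop_page_caches()` as root after killing the previous server and before loading the next model.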

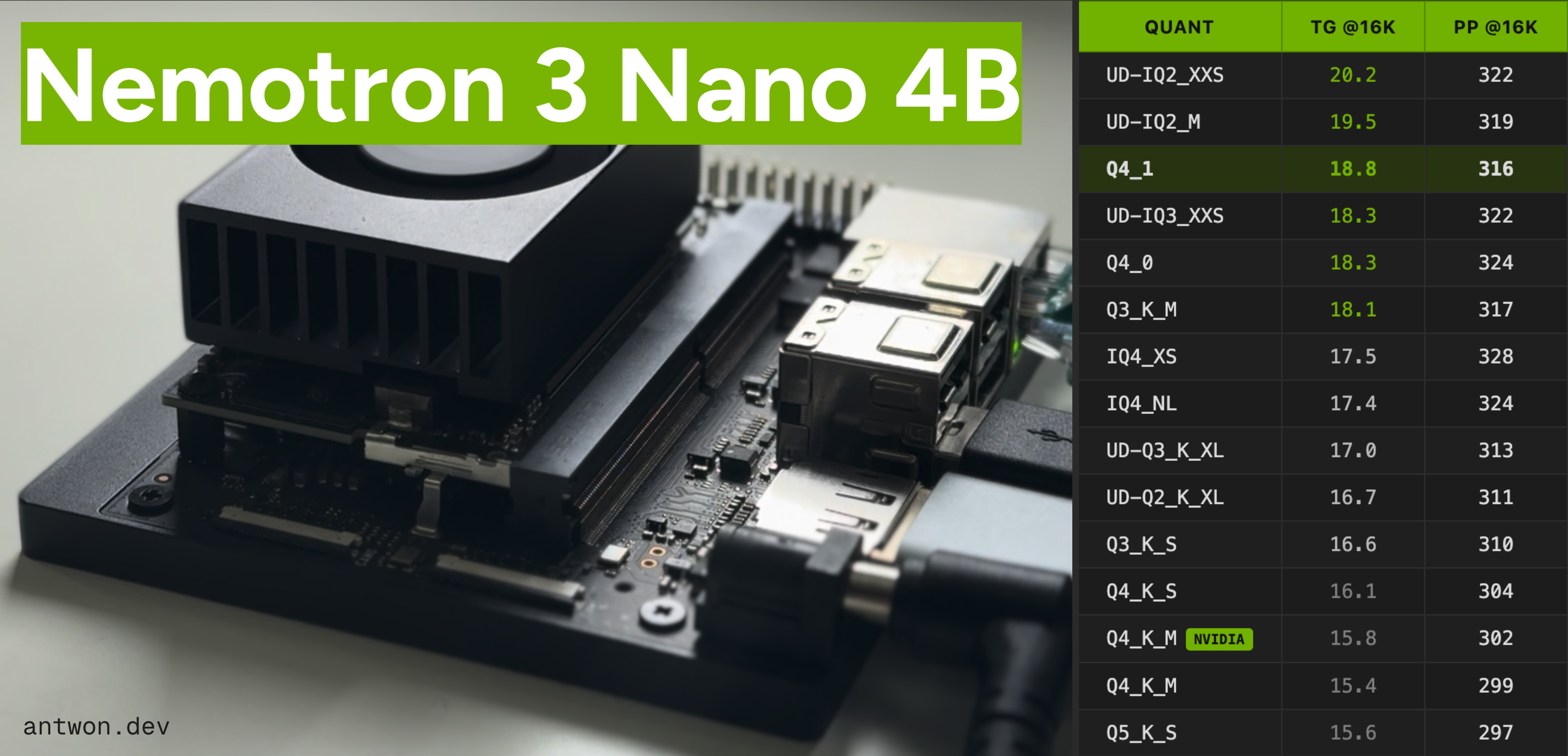

Benchmark Results

15 GGUF quantizations of Nemotron 3 Nano 4B on the Jetson Orin Nano Super, sorted by generation speed.

Gen = generation tok/s. PP = prompt processing tok/s. RAM in MiB. All GGUFs are from Unsloth unless marked otherwise.

| # | Quant | Size | BPW | RAM | 4K Gen | 8K Gen | 16K Gen | 4K PP | 8K PP | 16K PP |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | UD-IQ2_XXS | 2.03 GiB | 4.38 | 1,804 | 20.2 | 20.2 | 20.2 | 322 | 322 | 322 |

| 2 | UD-IQ2_M | 2.13 GiB | 4.61 | 1,915 | 19.6 | 19.6 | 19.5 | 319 | 320 | 319 |

| 3 | Q4_1 | 2.51 GiB | 5.43 | 2,329 | 18.8 | 19.1 | 18.8 | 317 | 317 | 316 |

| 4 | UD-IQ3_XXS | 2.22 GiB | 4.79 | 2,002 | 18.7 | 18.6 | 18.3 | 323 | 322 | 322 |

| 5 | Q4_0 | 2.35 GiB | 5.07 | 2,183 | 18.4 | 18.4 | 18.3 | 324 | 325 | 324 |

| 6 | Q3_K_M | 2.29 GiB | 4.95 | 2,123 | 18.2 | 18.2 | 18.1 | 318 | 318 | 317 |

| 7 | IQ4_XS | 2.36 GiB | 5.11 | 2,150 | 17.5 | 17.6 | 17.5 | 328 | 328 | 328 |

| 8 | IQ4_NL | 2.38 GiB | 5.15 | 2,172 | 17.4 | 17.4 | 17.4 | 324 | 324 | 324 |

| 9 | UD-Q3_K_XL | 2.49 GiB | 5.39 | 2,257 | 17.1 | 17.1 | 17.0 | 313 | 313 | 313 |

| 10 | UD-Q2_K_XL | 2.32 GiB | 5.03 | 2,087 | 16.7 | 16.7 | 16.7 | 311 | 310 | 311 |

| 11 | Q3_K_S | 2.19 GiB | 4.73 | 2,019 | 16.6 | 16.6 | 16.6 | 310 | 310 | 310 |

| 12 | Q4_K_S | 2.63 GiB | 5.69 | 2,424 | 16.1 | 16.1 | 16.1 | 305 | 304 | 304 |

| 13 | Q4_K_M (NVIDIA) | 2.63 GiB | 5.70 | 2,429 | 15.9 | 15.9 | 15.8 | 302 | 302 | 302 |

| 14 | Q4_K_M | 2.69 GiB | 5.82 | 2,489 | 15.6 | 15.4 | 15.4 | 302 | 300 | 299 |

| 15 | Q5_K_S | 2.89 GiB | 6.25 | 2,665 | 15.6 | 15.6 | 15.6 | 298 | 296 | 297 |
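For anyone wondering how the BPW (bits per weight) column relates to the Size column, here is a rough sketch of the arithmetic: file size in bits divided by the number of parameters. The parameter count below (about 3.98 billion) is an assumption back-solved from the table itself, not an official figure, and GGUF files also carry some metadata, so expect the results to land near the table's values rather than match them exactly.

```python
GIB = 1024 ** 3  # bytes per GiB

# Assumption: ~3.98 billion parameters, inferred from the Size and BPW columns
# of the table above. This is not an official parameter count.
ASSUMED_PARAMS = 3.98e9


def bits_per_weight(size_gib: float, n_params: float = ASSUMED_PARAMS) -> float:
    """Approximate bits per weight: total file bits divided by parameter count."""
    return size_gib * GIB * 8 / n_params


# A few rows from the table, as (quant name, file size in GiB).
for name, size_gib in [("UD-IQ2_XXS", 2.03), ("Q4_K_M", 2.69), ("Q5_K_S", 2.89)]:
    print(f"{name}: {bits_per_weight(size_gib):.2f} BPW")
```

Because the sizes in the table are rounded to two decimals, small differences from the listed BPW values are expected.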

Sample Output

Here's a response that it gave me when asked about its creator, with its reasoning ("Thinking") followed by its answer ("Response").

Tell me about NVIDIA.

Thinking

Okay, the user asked me to tell them about NVIDIA. Hmm, this is a pretty broad question, so I need to figure out what level of detail they want. Are they a student researching for a project? An investor looking for company info? Or just someone casually curious? Since they didn't specify, I'll assume they want a general overview but with some key points that matter to different audiences.

First, I should cover the basics: who they are, what they do, and why they matter. NVIDIA is a big name in tech, especially in GPUs and AI. But I shouldn't just list facts—I need to explain why their products are revolutionary. Like, the GPU shift from gaming to AI and data centers is a huge pivot. That's probably the most interesting angle.

Wait, the user might not know what GPUs are. Should I define that? Maybe briefly: "Graphics Processing Units for parallel computing." But since it's a tech term, I'll assume they've heard of it. Focus on their impact.

Key points to include: Founded in 1993 by Jensen Huang (got that name right—Jensen Huang, not "Jensen" as a typo). Started with gaming GPUs (GeForce series), but now dominates AI and data centers. Why it matters: Without NVIDIA's GPUs, modern AI like ChatGPT wouldn't exist. They're the engine behind AI training. Products: RTX series for gaming, A100/H100 for data centers. Recent buzz: Blackwell chips for next-gen AI.

But I shouldn't just list products. Need to connect it to real-world impact. Example: "NVIDIA's GPUs process billions of calculations per second, enabling AI to learn from massive datasets." That makes it tangible.

Also, watch out for common misconceptions. Some think NVIDIA is only about gaming. Gotta clarify their pivot to AI. Maybe mention their data center revenue is now larger than gaming—shows their strategic shift.

User might wonder about competitors. AMD and Intel are trying to catch up, but NVIDIA has a clear lead in AI. Should I note that? Yes, briefly: "While competitors exist, NVIDIA's ecosystem (CUDA, drivers) is unmatched."

Deep needs? If they're asking casually, they might want to understand why NVIDIA's stock is so hot or why AI boom is tied to them. But since they didn't ask, I'll stick to fundamentals. Avoid jargon like "CUDA" unless I explain it ("NVIDIA's software platform for AI development").

Structure the answer: 1. Who/when: Founded 1993, headquartered in Santa Clara. 2. What: GPUs, now AI/data center focus. 3. Why it's big: AI revolution, data center revenue growth. 4. Key products: GeForce, RTX, A100. 5. Impact: Without them, no modern AI. 6. Recent moves: Blackwell chips.

Avoid going too deep into history—like their early struggles with the 486 chip. That's irrelevant now. Stay focused on current relevance.

Also, check for accuracy: Founded 1993? Yes. Jensen Huang? Yes. Data center revenue now bigger than gaming? As of 2023, yes—NVIDIA reported $11B data center revenue in 2023 vs. $10B gaming. Good.

Should I mention their stock price? Maybe not unless asked. User didn't ask for financials.

Finally, wrap up with why it matters: NVIDIA isn't just a company; they're a catalyst for AI. That's the big takeaway.

Double-check: Did I cover "why NVIDIA matters" clearly? Yes—by linking to AI progress.

Okay, draft in mind: Opening: "NVIDIA is a leading tech company..." Then the pivot to AI. Products as examples. Impact statement.

Avoid markdown, keep it conversational. User said "tell me," so it's a chat, not a formal report.

Wait, is "you'll find" too informal? Maybe "NVIDIA's GPUs..." is fine.

Alright, time to write.

Response

NVIDIA Corporation is a leading technology company founded in 1993 by Jensen Huang (a former engineer at Xerox PARC). Headquartered in Santa Clara, California, it revolutionized computing by pioneering graphics processing units (GPUs) and later becoming the engine behind the AI revolution. Here's a concise breakdown of what makes NVIDIA so significant:

Core Identity & Evolution

- Origins: Started in 1993 as a gaming-focused GPU company (e.g., GeForce series for 3D gaming).

- Pivot: Shifted focus to data centers and AI starting in the 2010s. Today, AI/data center revenue dominates (over 80% of total revenue), while gaming is now a secondary market.

- Why it matters: Without NVIDIA's GPUs, modern AI (like ChatGPT, image generators, and self-driving cars) wouldn't exist. Their hardware is the backbone of AI training and inference.

Key Products & Technologies

- GPUs (Graphics Processing Units):

  - Originally for gaming, now used for parallel computing (e.g., rendering, physics simulations).

  - RTX Series: Gaming-focused (e.g., RTX 4090), but also accelerates AI workloads via ray tracing and DLSS (deep learning superresolution).

- Data Center AI Chips:

  - A100/H100: High-performance GPUs for AI training/inference (e.g., training large language models).

  - Blackwell Architecture (2023): Next-gen chips with 2x faster AI training and 3x better memory bandwidth (e.g., GB200 for cloud AI).

- Software Ecosystem:

  - CUDA: A parallel computing platform and programming model (used by 90%+ of AI developers).

  - Omniverse: For 3D/AR/VR and simulation.

  - NVIDIA AI Enterprise: Tools for deploying AI in enterprise workflows.

Why NVIDIA is a Global Phenomenon

| Area | Impact |

|---|---|

| AI Dominance | Powers 95% of AI training at companies like Google, Meta, and Microsoft. |

| Data Center Growth | Reported $11B in data center revenue in 2023 (up 180% YoY). Gaming revenue is now ~$1B (down from $10B+). |

| Competition | AMD and Intel are trying to catch up, but NVIDIA's CUDA ecosystem is unmatched. |

| Stock Price | Surged to $1,000+ per share (2022–2024) due to AI demand. |

Recent Milestones

- 2023: Blackwell chips launched; NVIDIA's AI revenue hit $3B+.

- 2024: H100/A100 sales hit $5B+ (2023).

- 2023: NVIDIA's data center revenue exceeded $12B (vs. gaming at ~$1B).

- 2024: Blackwell GPUs power Google's Gemini, Microsoft's Azure, and OpenAI's infrastructure.

Criticisms & Challenges

- Cost: High-end GPUs (e.g., A100/H100) cost $30K–$50K—prohibitive for smaller businesses.

- Supply Chain: Overwhelmed by AI demand (e.g., 2022 shortage caused factory shutdowns).

- Environmental Impact: AI training consumes ~250,000 tons of CO2 annually (equivalent to 55M cars' emissions).

In Short

NVIDIA isn't just a tech company—it's the invisible engine of the AI era. Its GPUs enable everything from streaming video to training the next generation of AI models. While it started as a gaming firm, it now shapes how the world uses AI. As Jensen Huang says: "NVIDIA is not just a company—it's a movement."

If you'd like deeper details on a specific product (e.g., H100 specs), market impact, or competitors, just ask!

Setup

Build Flags

llama.cpp was compiled with the following CMake flags:

| Flag | Purpose |

|---|---|

| -DGGML_CUDA=ON | CUDA backend |

| -DGGML_CUDA_FA=ON | CUDA flash attention |

| -DGGML_CUDA_GRAPHS=ON | CUDA graph optimization |

| -DCMAKE_BUILD_TYPE=Release | Compiler optimizations |
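As a rough sketch, the flags above can be assembled into the usual two-step CMake invocation (configure, then build). The `build` directory name and the helper function names are assumptions for illustration. Run the printed commands from the root of your llama.cpp checkout.

```python
import shlex

# The four CMake flags from the table above.
CMAKE_FLAGS = [
    "-DGGML_CUDA=ON",
    "-DGGML_CUDA_FA=ON",
    "-DGGML_CUDA_GRAPHS=ON",
    "-DCMAKE_BUILD_TYPE=Release",
]


def configure_command(build_dir: str = "build") -> list[str]:
    """CMake configure step; build_dir is an assumed directory name."""
    return ["cmake", "-B", build_dir, *CMAKE_FLAGS]


def build_command(build_dir: str = "build") -> list[str]:
    """CMake build step for the configured tree."""
    return ["cmake", "--build", build_dir, "--config", "Release"]


# Print the commands so they can be pasted into a shell.
print(shlex.join(configure_command()))
print(shlex.join(build_command()))
```

You could also pass these lists straight to `subprocess.run(..., check=True)` instead of printing them.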

Server Flags

Each quantization was benchmarked using llama-server with identical flags:

| Flag | Value | Purpose |

|---|---|---|

| -m | <model>.gguf | Model file |

| -c | 4096 | Context length |

| -np | 1 | Parallel slots |

| -ngl | 999 | Offload all layers to GPU |

| -fa | on | Flash attention |

| -ctk | q8_0 | KV cache key type |

| -ctv | q8_0 | KV cache value type |

| -b | 2048 | Batch size |

| -ub | 512 | Micro-batch size |
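Put together, the table corresponds to a launch command along these lines. This is a sketch: the model filename is hypothetical, `llama-server` is assumed to be on your `PATH`, and the context length is left as a parameter so it can be changed from the 4096 shown in the table.

```python
import shlex


def server_command(model_path: str, ctx: int = 4096) -> list[str]:
    """Build the llama-server argument list from the flags in the table above."""
    return [
        "llama-server",
        "-m", model_path,   # model file
        "-c", str(ctx),     # context length
        "-np", "1",         # parallel slots
        "-ngl", "999",      # offload all layers to the GPU
        "-fa", "on",        # flash attention
        "-ctk", "q8_0",     # KV cache key type
        "-ctv", "q8_0",     # KV cache value type
        "-b", "2048",       # batch size
        "-ub", "512",       # micro-batch size
    ]


# Hypothetical filename; substitute whichever quant you downloaded.
print(shlex.join(server_command("nemotron-3-nano-4b-Q4_K_M.gguf")))
```

Keeping every flag except the model path fixed is what makes the per-quant numbers comparable.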

Additional Notes

The speeds aren't bad for a $250 computer! However, output quality was not assessed in this benchmark. If that is something you would like me to compare, let me know in the comments of the corresponding YouTube video!